At Datameister, our codebase and intellectual property have been built around a Monorepo, and we are now seeing the long-term benefits of that architecture. If you’re curious about what those benefits are and how they shaped both our technology and our company, this article is for you.

First, what is a Monorepo? It is shorthand for monolithic repository. The word monolithic comes from the Ancient Greek μόνος (monos, “single”) and λίθος (lithos, “stone”), conveying the idea of a single, solid block. Applied to a repository, it means treating the codebase as one unified whole rather than dividing it across multiple repositories. Although the term itself only gained popularity relatively recently (we can trace the original Wikipedia entry back to 2018), the underlying idea is far from new: it closely aligns with the concept of a shared codebase.

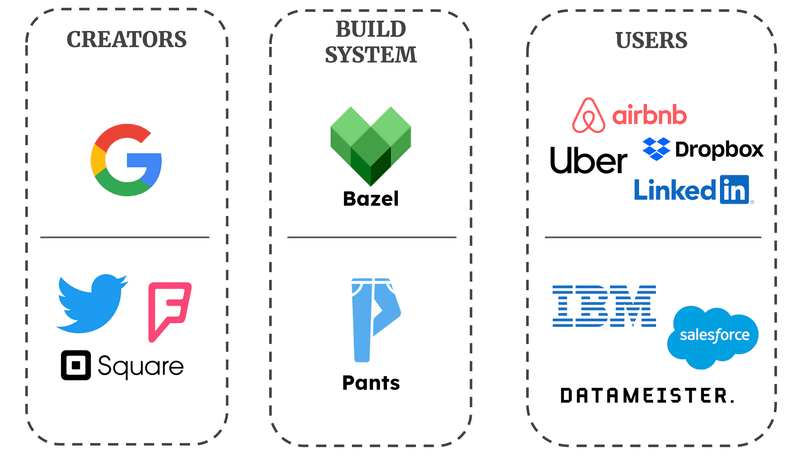

Today, many companies have demonstrated that a Monorepo isn’t just a promising idea, but a very viable choice even for extremely large codebases:

- Google - Why Google Stores Billions of Lines of Code in a Single Repository

- Meta - What it is like to work in Meta’s (Facebook’s) monorepo

- Uber - Controlling the Rollout of Large-Scale Monorepo Changes

- Dropbox - Speeding up a Git monorepo at Dropbox with <200 lines of code

- Canva - We Put Half a Million files in One git Repository, Here's What We Learned

- ...

Build systems

It is important to note that a Monorepo is merely an architectural decision, and its success largely depends on the build system (i.e., the tooling) used to construct and maintain it. Don’t worry, we’ll discuss this in more detail later in the article. Here we mention two build systems in particular: Bazel and Pants, as shown in the image below.

Bazel

- Originally Blaze: Google’s internal build tool

- Purpose: tackle scaling issues with extremely large codebases

- Widely adopted in the industry

- Large community: Airbnb, Dropbox, LinkedIn, ...

Pants

- Created by Twitter, Foursquare and Square

- Similar purpose: replace fragmented build tooling with a single project

- Growing community: IBM, Salesforce, and... Datameister!

Why choose a Monorepo?

A quick Google search can give you an extensive list of pros and cons, but that doesn’t really tell you much without any context. That’s because your design choices only pay off when they align with your use case. In other words, you lose every advantage you don’t make proper use of.

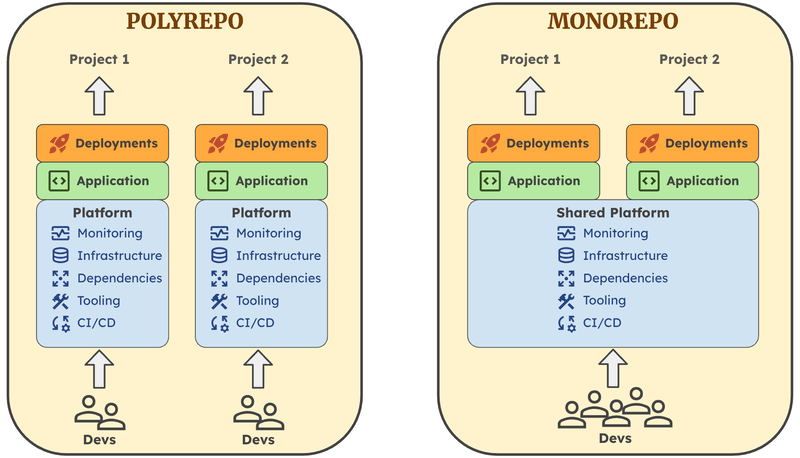

The figure above illustrates the difference between a Monorepo and a decentralized, Polyrepo architecture. In a Monorepo, integrations are built on top of a shared foundation, which allows for reusability across projects. On the other hand, a Polyrepo typically consists of separate vertical stacks with little to no interaction between them.

We’ve identified three core benefits that played a big role in our decision, as they closely align with our mentality.

- Knowledge-sharing

With a centralized codebase, knowledge flows naturally across the whole team. By creating shared, easily accessible libraries, we don’t have to reinvent the wheel for every project. As such, core algorithms, models, and frameworks are readily available. Moreover, everyone can contribute to these libraries, which builds a strong and continuously evolving foundation.

- High developer velocity

A Monorepo also enables shared tooling, which ensures everyone is familiar with the development workflow, even when switching between projects. Consequently, slow or repetitive steps can be eliminated, which substantially boosts developer velocity. Furthermore, it’s easier to maintain and improve one unified tooling framework than many separate toolsets.

- Consistent quality

The shared infrastructure is definitely a strength, but it also brings with it much greater responsibility. For example, a bug in a shared library can impact all dependent code, which raises the bar for quality. This encourages consistency across the entire codebase, spanning from a coherent coding style to reliable design patterns in more complex tasks.

Now you might wonder, how do we make full use of these advantages?

From idea to product

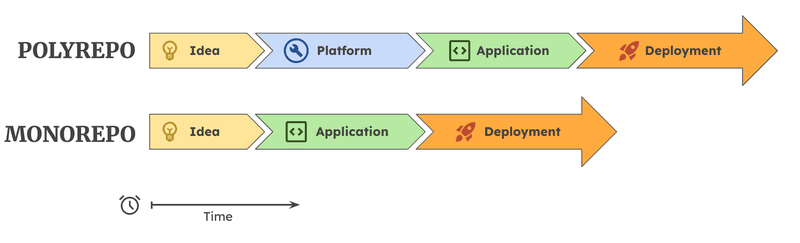

As shown in the figure, the key advantage of our Monorepo setup is how much it compresses the time from idea to production. The reason is simple: the foundation is already in place.

Instead of going through repeated setup and integration steps for every project, developers can immediately focus on building the client-specific application logic. There’s no need to reassemble the surrounding platform (monitoring, infrastructure, tooling, etc.), because it already exists as a cohesive foundation. Rather than copying and patching together setups that were never designed to be universal, we build on a system that was designed from the start to work across all projects.

The result is a fast, iterative workflow:

- ideas quickly become prototypes

- prototypes can be refined in short cycles

- validated solutions can be deployed immediately

Where Polyrepo setups introduce delays through coordination and repeated setup, the Monorepo enables a continuous flow, allowing us to move from idea to production in a fraction of the time.

Build-A-Monorepo

This still leaves one important question: how do you actually set up and maintain a Monorepo in practice? In our experience, this is far from trivial and very much an ongoing process rather than a one-time task.

As mentioned earlier, the success of a Monorepo depends heavily on the build system. Some organizations ended up building this tooling from the ground up (Google being the canonical example with Blaze), but we were fortunate not to have to start from scratch. We initially considered Bazel as our build system, but in our experience its support for Python fell a bit short. For an AI-focused company like ours, with Python as the backbone, that would have created a lot of unwanted friction.

Pants, by contrast, is designed with Python as a core use case. It provides strong support for dependency inference, reproducible builds, and fast, incremental workflows, while remaining approachable for developers who are not build-system specialists. This balance has been key for us: the build system is powerful enough to scale with the Monorepo, but accessible enough that developers can reason about it and extend it themselves.
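To give a feel for what dependency inference means, here is a minimal sketch in plain Python. It is not how Pants is implemented: it only illustrates the general idea of reading a file's import statements and mapping them to the targets that own those modules. The `MODULE_OWNERS` table, the target names, and the `infer_dependencies` helper are hypothetical and exist purely for illustration.

```python
import ast

# Hypothetical mapping from importable module name to the target that owns it.
# A real build system would derive this by scanning the repository.
MODULE_OWNERS = {
    "libs.vision.detector": "libs/vision:detector",
    "libs.common.logging": "libs/common:logging",
}


def infer_dependencies(source):
    """Return the first-party targets imported by the given Python source."""
    imported = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            imported.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            imported.add(node.module)
            # `from pkg import mod` may refer to the module `pkg.mod`.
            imported.update(f"{node.module}.{alias.name}" for alias in node.names)
    # Third-party and stdlib imports have no owner here and are ignored.
    return {MODULE_OWNERS[name] for name in imported if name in MODULE_OWNERS}


ENDPOINT_SOURCE = """
import json
from libs.vision import detector
from libs.common.logging import get_logger
"""

deps = infer_dependencies(ENDPOINT_SOURCE)
```

The point of the sketch is that the developer never lists these dependencies by hand: the imports in the code are the single source of truth, and the build graph follows from them.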

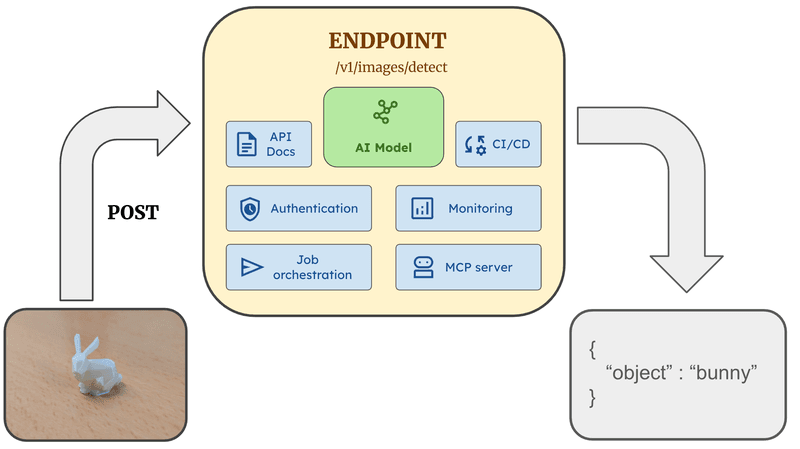

Deploying an endpoint in an afternoon

To make this tangible, let's walk through a toy example. Say we want to deploy a new endpoint that accepts an image, runs an AI model on it, and tells you which objects can be seen in the image. In a traditional setup, this means days of scaffolding before we even get to the interesting part. In our Monorepo, we write one thing: the image processing logic. Everything around it is already in place.

When we spin up a new endpoint:

- API documentation is auto-generated and stays in sync with the code, alongside developer-friendly API collections for quick manual testing.

- Authentication is wired in by default, consistent across all our services.

- Async job orchestration is available when we need it. For heavier workloads, a built-in job system handles processing with status tracking and retries, so the endpoint can accept a request, hand off the work, and let the caller poll for results.

- Structured logging and monitoring come for free. Metrics are collected automatically, and spinning up a monitoring dashboard takes only a few lines of Python thanks to a dashboards-as-code library that plugs straight into our infrastructure.

- MCP integration is a one-liner on top of the existing API, making the endpoint callable as a tool by AI agents without any extra plumbing.
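The accept, hand off, and poll pattern from the async job item above can be sketched in a few lines. This is not our actual job system: it is a minimal in-memory illustration using only the standard library, and the names `submit_job`, `get_status`, `poll_until_done`, and `flaky_detect` are hypothetical.

```python
import time
import uuid
from concurrent.futures import ThreadPoolExecutor

executor = ThreadPoolExecutor(max_workers=2)
jobs = {}  # job_id -> status record (in-memory stand-in for a real job store)


def _run_with_retries(job_id, task, payload, max_attempts):
    job = jobs[job_id]
    job["status"] = "running"
    for attempt in range(1, max_attempts + 1):
        job["attempts"] = attempt
        try:
            job["result"] = task(payload)
            job["status"] = "succeeded"
            return
        except Exception as exc:  # keep the last error for status tracking
            job["error"] = repr(exc)
    job["status"] = "failed"


def submit_job(task, payload, max_attempts=3):
    """Accept the request, hand off the work, and return a job id immediately."""
    job_id = str(uuid.uuid4())
    jobs[job_id] = {"status": "queued", "result": None, "attempts": 0, "error": None}
    executor.submit(_run_with_retries, job_id, task, payload, max_attempts)
    return job_id


def get_status(job_id):
    """What a status endpoint would return to a polling caller."""
    return dict(jobs[job_id])


def poll_until_done(job_id, interval=0.01, timeout=5.0):
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        status = get_status(job_id)
        if status["status"] in ("succeeded", "failed"):
            return status
        time.sleep(interval)
    raise TimeoutError(f"job {job_id} did not finish within {timeout}s")


_calls = {"n": 0}


def flaky_detect(image_name):
    """Stand-in for model inference that fails once, then succeeds."""
    _calls["n"] += 1
    if _calls["n"] == 1:
        raise RuntimeError("transient failure")
    return {"image": image_name, "objects": ["cat"]}


job_id = submit_job(flaky_detect, "street.png")
final = poll_until_done(job_id)
```

In this sketch the first attempt fails and the retry succeeds, so the caller only ever sees the job move from queued to succeeded. A production version would persist job state and run workers in separate processes or machines.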

Crucially, none of this is copy-pasted boilerplate. Every endpoint shares the same libraries, the same dependency tree, and the same CI/CD pipeline. When a security patch lands or a dependency gets updated, every service benefits at once rather than quietly drifting apart. The developer's entire focus stays on the thing that actually matters: the model and the business logic. Getting it to production is just a pull request away.

The benefits of our Monorepo extend even beyond these “supporting” components. It’s also how we share and scale research. Instead of letting experiments live in isolated repos, we turn them into reusable building blocks. From object detection (like our DETR work) to 3D asset generation and real-to-sim scene reconstruction (see Trellis), new ideas quickly become tools that anyone can use in production!

A Double-Edged Sword

It is important to remember that a Monorepo is not a magical, one-size-fits-all solution. It offers strong benefits, but also introduces its own set of challenges.

Scaling

- Tools like Pants help, but growth still adds complexity

- More developers and projects require clear structure and boundaries

- Continuous feedback and disciplined repo management remain essential

Isolation

- Everything is interconnected, which is powerful but risky

- Changes can have wide impact across projects

- Balance is key: isolate where needed, reuse where it helps
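To make the "wide impact" point concrete, the sketch below computes which targets are affected when one shared library changes, by walking the dependency graph in reverse. The graph, the target names, and the `transitive_dependents` helper are hypothetical; a build system answers the same question from the real dependency graph of the repository.

```python
from collections import deque

# Hypothetical dependency graph: target -> targets it depends on directly.
DEPENDENCIES = {
    "libs/common": [],
    "libs/vision": ["libs/common"],
    "services/detect-api": ["libs/vision"],
    "services/billing": ["libs/common"],
    "tools/docs": [],
}


def transitive_dependents(changed, dependencies):
    """Return every target that directly or indirectly depends on `changed`."""
    # Invert the graph: target -> targets that depend on it directly.
    dependents = {target: set() for target in dependencies}
    for target, deps in dependencies.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(target)

    # Breadth-first walk over the inverted graph.
    affected = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, ()):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return affected


affected_by_common = transitive_dependents("libs/common", DEPENDENCIES)
affected_by_vision = transitive_dependents("libs/vision", DEPENDENCIES)
```

A change to the hypothetical `libs/common` touches almost everything, while a change to `libs/vision` only reaches one service. That asymmetry is why foundational libraries deserve the strictest review and test coverage.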

Edge cases

- Not all code fits naturally into a Monorepo

- Forcing everything in can be counterproductive

- The Monorepo can still serve as a reference for quality and tooling

In short:

A Monorepo doesn’t remove complexity, but it makes it manageable. With the right tools and engineering culture, it’s a strong foundation, but it will always remain a work in progress.

Conclusion

Looking back, the real benefits of the Monorepo didn’t come from the architecture alone, but from how it shaped the way we work at Datameister. It encourages strong collaboration, pushes us toward higher standards, and lets us move faster with more confidence. Over time, those small advantages compounded into a development environment where research ideas quickly turn into production-ready capabilities, and every new project starts on top of everything we've built before.

That compounding is what our clients end up feeling directly. When we take on a new project, the foundation is already there. What's left to build is the model and the application logic the client actually hired us for. The path from first conversation to a robust deployment keeps getting shorter, because each model we ship sharpens the components underneath it.

After enough cycles, the codebase starts behaving more like an AI factory than a codebase: a system where mature sub-components let us take on harder problems by composing what's already there instead of starting over. It's why we can credibly move into physical AI today, where the work depends on a stack of capabilities that have to be solid individually before they're worth anything together. The Monorepo isn't a plug-and-play decision. It's the ongoing investment that turns every project we ship into raw material for the next, harder one.